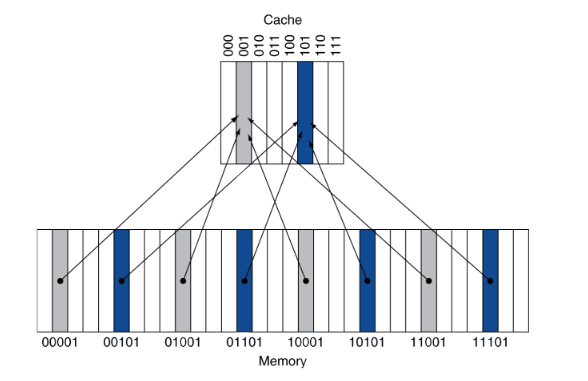

# Pipeline Hazards And Cache

---

CS 130 // 2022-11-10

<!--====================================================================-->

# Announcements

<!-- .slide: data-background="#004477" -->

<!--====================================================================-->

## Installing stuff for C programming

Before you come to class next week: [install guide](../../resources/installing-visual-studio-code)

- install Visual Studio Code (IDE for writing code)
- install C/C++ extensions for VSC
- install a C compiler
- try compiling a C program
- do your best to troubleshoot issues
- bring any remaining issues to class

<!--=====================================================================-->

## Exam 3 has been posted

- Due 11/22/2022 (Tuesday before Thanksgiving)
- I'm giving you a lot of time
- please plan ahead and give yourself time to complete it
- I will cancel class some time between now and then to give you time to work on the exam
- I still don't know if/when I have to report for jury duty
- I will let you know *which day* we have off as soon as I can

<!--=====================================================================-->

## Office Hour Adjustments

I need to cancel office hours *today* and *next Tuesday*

- both cancelations are for very important Drake business
- I'm very sorry!
- I will try to help as much as I can via email/Teams

<!--=====================================================================-->

# Review

<!-- .slide: data-background="#004477" -->

<!--=====================================================================-->

## Review Discussion

- what is pipelining?
- what is a pipeline hazard?
- what are the different types of pipeline hazards?
- what are some ways to deal with pipeline hazards?

<!--====================================================================-->

# Pipeline Hazard Exercises

<!-- .slide: data-background="#004477" -->

<!--=====================================================================-->

## Data Hazard Exercise

- Consider the following MIPS code:

```mips
lw $t0, 40($a3)
add $t6, $t0, $t2
sw $t6, 40($a3)
```

- <!-- .element: class="fragment"--> Assuming there is no forwarding implemented, are any stalls necessary?
- <!-- .element: class="fragment"--> How many clock cycles are required to execute these three lines of code without forwarding?

<!---------------------------------->

## Data Hazard Exercise

- Consider the following MIPS code:

```mips
lw $t0, 40($a3)
add $t6, $t0, $t2
sw $t6, 40($a3)
```

- Assuming there IS forwarding implemented, are any stalls necessary?
- <!-- .element: class="fragment"--> How many clock cycles are required to execute these three lines of code with forwarding?

<!--====================================================================-->

# Pipelined CPU Design

<!-- .slide: data-background="#004477" -->

<!--====================================================================-->

## Pipelined Control Complete

<img src='/~manley/CS130/Fall2022/assets/images/COD/pipelined_control_complete.png' height='550'>

<!--====================================================================-->

### Pipelined Architecture with Hazard Detection and Forwarding

<img src='/~manley/CS130/Fall2022/assets/images/COD/pipelined_architecture_with_forwarding.png' height='550'>

<!--====================================================================-->

# Caches

<!-- .slide: data-background="#004477" -->

<!--====================================================================-->

## Memory Organization

- When a program uses memory, it tends to use it in *predictable ways*
- <!-- .element: class="fragment"--> As a result, it is possible to speed up memory usage dramatically by creating a **memory hierarchy**
  + <!-- .element: class="fragment"--> Register file is small but it's ridiculously fast
  + <!-- .element: class="fragment"--> SRAM is larger but slower
  + <!-- .element: class="fragment"--> DRAM is larger still but even slower
  + <!-- .element: class="fragment"--> Hard disks are HUGE but also the slowest

<!--====================================================================-->

## Terminology

- **Cache:**
  + An auxiliary memory from which high-speed retrieval is possible
- <!-- .element: class="fragment"--> **Block:**
  + A minimum unit of information that can either be present or not present in the cache
- <!-- .element: class="fragment"--> **Hit:**
  + CPU finds what it is looking for in the cache
- <!-- .element: class="fragment"--> **Miss:**
  + CPU doesn't find what it's looking for in the cache

<!--====================================================================-->

## Designing a Cache

- Having a hierarchy of memories to speed up our computations is great, but we also face several design challenges
- <!-- .element: class="fragment"--> If we are looking for a value in memory address `x`, how do we know if it is already in the cache?

<!--====================================================================-->

## Direct Mapped Cache

- One idea is to use a **direct mapped cache** where the address `$x_n$` tells us where to look in the cache
- <!-- .element: class="fragment"--> If there are `n` slots in the cache, then we look for `x` in the `x % n` index of the cache

<!---------------------------------->

## Direct Mapped Cache

<!---------------------------------->

## Direct Mapped Cache

- If the number of blocks in the cache is `$n = 2^k$`, then `(x % n)` is simply the last `k` bits of `x`
  + Makes it extremely efficient to find where a block is in the cache
- <!-- .element: class="fragment"--> Since multiple blocks may have the same index in the cache, how do we know if the block of memory there is the one we're looking for?
  + <!-- .element: class="fragment"--> Include a **tag** that uniquely identifies the block

<!---------------------------------->

## Direct Mapped Cache

- Some parts of the cache can be empty and/or underutilized if their index, by chance, doesn't pop up as often
- <!-- .element: class="fragment"--> How can we improve utilization?

<!--====================================================================-->

## Fully Associative Cache

- The "extreme" alternative to direct mapped caching is **fully associative** caching
  + Any block can be stored in *any* index of the cache

---

- <!-- .element: class="fragment"--> **Advantage**: Every spot in the cache will be used, and therefore fewer cache misses will occur
- <!-- .element: class="fragment"--> **Disadvantage**: We need to search the entire cache every time, so the hit time will increase

<!--====================================================================-->

## Set-Associative Cache

- The compromise between these approaches is the **set-associative** cache
  + Blocks are *grouped* into sets of `n` blocks
  + Block number determines which set
- <!-- .element: class="fragment"--> Still requires searching through all `n` blocks in a set
- <!-- .element: class="fragment"--> Can be tuned to have a decent balance between hit rate and hit time

<!--====================================================================-->

<img src='/~manley/CS130/Fall2022/assets/images/COD/cache_comparisons.png' >

<!--====================================================================-->

## Handling a Cache Miss

1. Check the cache for a memory address
   + Results in a miss
2. <!-- .element: class="fragment"--> Fetch corresponding block from RAM or disk
   + Wait for block to be retrieved (stall)
   + <!-- .element: class="fragment"--> Write block to cache
3. <!-- .element: class="fragment"--> Continue pipeline (unstall)

<!---------------------------------->

<img src='/~manley/CS130/Fall2022/assets/images/COD/handling_cache_misses.png' height='550'>

<!--====================================================================-->

## Multilevel Caches

- Usually, caches are implemented in multiple "levels" for maximal efficiency
  + <!-- .element: class="fragment"--> L1 is smallest/fastest
  + <!-- .element: class="fragment"--> L2 is larger/slower
  + <!-- .element: class="fragment"--> L3 is largest/slowest
- <!-- .element: class="fragment"--> Modern multi-core processors typically have dedicated L1 and L2 caches for each core and a shared L3 cache
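
<!--====================================================================-->

## Direct Mapped Cache Sketch

To make the index and tag ideas from the direct mapped cache slides concrete, here is a minimal Python sketch of a lookup. It is a toy model, not how hardware is built: the class name `DirectMappedCache`, the choice of `k = 3` (8 blocks), and the address trace are all assumptions made up for illustration, and the cache stores only tags, not data.

```python
class DirectMappedCache:
    """Toy direct mapped cache that tracks only tags (hypothetical model)."""

    def __init__(self, k):
        self.k = k                     # number of index bits
        self.n = 1 << k                # number of blocks, n = 2^k
        self.tags = [None] * self.n    # None marks an empty slot

    def access(self, x):
        """Look up block address x; return True on a hit, False on a miss."""
        index = x & (self.n - 1)       # same as x % n: the last k bits of x
        tag = x >> self.k              # remaining upper bits identify the block
        if self.tags[index] == tag:
            return True                # hit: the block we want is in this slot
        self.tags[index] = tag         # miss: fetch the block, overwrite the slot
        return False


cache = DirectMappedCache(k=3)             # 8 blocks (assumed size)
trace = [22, 26, 22, 26, 16, 3, 16, 18]    # made-up block addresses
results = [cache.access(x) for x in trace]
hits = sum(results)

for x, hit in zip(trace, results):
    print(f"address {x:2d} -> index {x % cache.n}, {'hit' if hit else 'miss'}")
print(f"{hits} hits, {len(trace) - hits} misses")
```

In this trace, addresses 26 and 18 both map to index 2, so the access to 18 misses and evicts 26 even though other slots are still empty. That is the utilization problem that fully associative and set-associative caches try to address.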